How Twilio Protocols works

Protocols helps businesses automate the workflows and processes that keep customer data clean and consistent at scale.


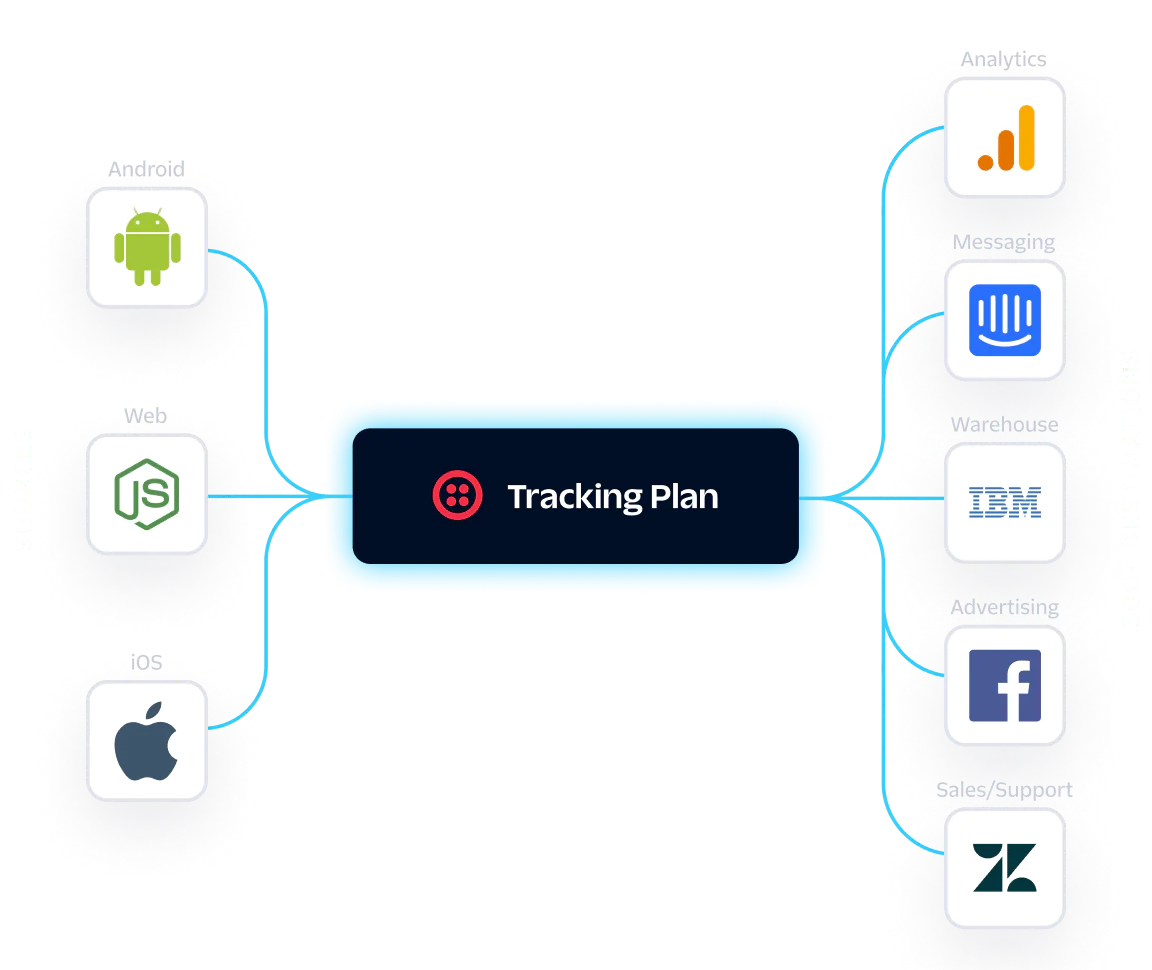

Create a shared data dictionary

Replace outdated spreadsheets and align teams throughout your company with a well-designed Tracking Plan.


Diagnose quality issues

Automate the QA process and catch data quality violations without spending time manually testing your tracking code.


Maintain trust in your data

Enforce data standards with strict controls that block non-conforming events, so your data stays high-quality over time and you can trust it.

Make data integrity your superpower

Protect customer data with proven best practices.

Protocols features

Access tools to scale your data quality management.

Connect all your customer data

Maintaining clean and consistent data is easy when you collect, unify, and activate all your first-party data in a single platform.

- Replace outdated spreadsheets with a data spec that outlines the events and properties you intend to collect from your sources.

- Review, resolve, and forward non-conforming events from all connected sources in one place.

- Configure your schema to selectively block events, properties, and traits at the source, or prevent them from passing through your CDP.

- Generate strongly-typed analytics libraries based on your Tracking Plan and reduce implementation errors.

- Apply transformations for total control over what data gets sent to downstream destinations.

- In-app reporting and daily email digests: Get the information you need to quickly take action on every invalid event before your team accidentally uses bad data to make decisions.

- Receive notifications about event volume anomalies and violation counts so you know when your data collection is broken, missing, or incorrect.

- Extend your workflow around customer data collection and activation with our Public API.
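To make the idea of checking events against a data spec more concrete, here is a minimal sketch in Python. It is not the Protocols implementation or API: the `TRACKING_PLAN` layout and the `find_violations` and `route` helpers are hypothetical names chosen for illustration, and the plan format is a simplified assumption.

```python
# Hypothetical, simplified Tracking Plan: event name -> required properties and types.
# This layout is an assumption for illustration, not the format Protocols uses.
TRACKING_PLAN = {
    "Order Completed": {"order_id": str, "total": float},
    "Product Viewed": {"product_id": str},
}


def find_violations(event, plan=TRACKING_PLAN):
    """Return a list of violations for one event; an empty list means it conforms."""
    name = event.get("event")
    spec = plan.get(name)
    if spec is None:
        return [f"unplanned event: {name!r}"]

    props = event.get("properties", {})
    violations = []
    # Every planned property must be present and have the expected type.
    for prop, expected in spec.items():
        if prop not in props:
            violations.append(f"missing property: {prop}")
        elif not isinstance(props[prop], expected):
            violations.append(f"wrong type for {prop}: expected {expected.__name__}")
    # Properties that the plan does not mention are flagged as well.
    for prop in props:
        if prop not in spec:
            violations.append(f"unplanned property: {prop}")
    return violations


def route(events, plan=TRACKING_PLAN):
    """Split events into (allowed, blocked). Blocked entries keep their violations for review."""
    allowed, blocked = [], []
    for event in events:
        violations = find_violations(event, plan)
        if violations:
            blocked.append((event, violations))
        else:
            allowed.append(event)
    return allowed, blocked
```

In this sketch, conforming events continue downstream while non-conforming ones are set aside together with the reasons they failed, which mirrors the review-and-block workflow described in the list above.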

Start automating your data governance and watch it scale—fast

Explore documentation, an online course, and more to help you get started with Protocols.

Need help setting up Protocols?

Work with one of our trusted partners to get coding support or explore a pre-built customer data solution.

Taking control of your data quality is easy

Discover how Protocols can improve trust in your data, reduce time spent validating, and ultimately allow your business to grow faster.

FAQ

Data cleansing is the process of ensuring data is complete, accurate, and reliable so that it can be used for analysis, decision-making, and reporting. Data cleansing includes correcting outdated or missing information, applying standardized naming conventions to data entries for consistency, deduplicating events, recording data transformations for transparency, and more.
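As a rough illustration of two of these steps, standardizing naming conventions and deduplicating events, consider the sketch below. The `message_id` field, the snake_case convention, and both helper names are assumptions made for the example, not a description of how any particular product cleans data.

```python
import re


def to_snake_case(name):
    """Normalize names such as 'orderId' or 'Total Price' to 'order_id' and 'total_price'."""
    name = re.sub(r"([a-z0-9])([A-Z])", r"\1_\2", name)  # split camelCase boundaries
    name = re.sub(r"[^A-Za-z0-9]+", "_", name)           # collapse spaces and punctuation
    return name.strip("_").lower()


def clean_events(events):
    """Standardize property names and drop duplicate events, keeping the first occurrence."""
    seen = set()
    cleaned = []
    for event in events:
        message_id = event["message_id"]  # hypothetical unique identifier per event
        if message_id in seen:
            continue  # duplicate delivery of an event we already kept
        seen.add(message_id)
        props = {to_snake_case(k): v for k, v in event.get("properties", {}).items()}
        cleaned.append({**event, "properties": props})
    return cleaned
```

Keeping the first occurrence is a design choice for this sketch; a real pipeline might prefer the latest copy or merge fields instead.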

Without properly cleaning data, organizations run the risk of basing strategies and campaigns on inaccurate or misleading information. Duplicate data entries, incomplete fields, and inconsistent naming conventions are common culprits behind “bad data,” which can cost businesses millions of dollars each year in misguided insights and poor decision-making.

To build a strong data foundation, pay close attention to which data you prioritize for ingestion, the naming conventions you apply to that data, and how you proactively align data collection with privacy. The first step is identifying outcome-oriented data based on the business goals your organization wants to achieve with a CDP. Define these goals first if you want to track meaningful data with your CDP.

Once you’ve identified outcome-oriented events to measure, you can use them to build a Tracking Plan. A Tracking Plan gives you a consistent roadmap for what data is important, where it’s being tracked, and why you’re tracking it. For an in-depth breakdown of how to align people and processes around a Tracking Plan, we recommend reading the Customer Data Maturity Guide.
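One way to picture the what, where, and why of a Tracking Plan is as a small data structure. The sketch below is only an assumed shape: `PlannedEvent`, its fields, and `events_for_source` are hypothetical names, not the schema Protocols uses.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PlannedEvent:
    """One Tracking Plan entry (hypothetical shape for illustration)."""
    name: str        # what is tracked
    sources: tuple   # where it is tracked, for example ("web", "ios")
    rationale: str   # why it is tracked: the business goal it supports
    properties: dict = field(default_factory=dict)  # property name -> expected type name


def events_for_source(plan, source):
    """Return the planned events that a given source is expected to send."""
    return [entry for entry in plan if source in entry.sources]
```

Recording the rationale next to each event keeps the plan tied to the business goals that motivated it, which makes it easier to retire events that no longer serve one.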

Protocols is priced based on the number of monthly tracked users (MTUs) you collect data on. For more details or to get a custom quote, contact our team.